Google's 'mind-reading' AI can tell what music you listened to based on your brain signals

Artificial intelligence can produce music that sounds similar to tunes people were listening to as they had their brains scanned, a collaborative study from Google and Osaka University shows.

By examining a person's brain activity, artificial intelligence (AI) can produce a song that matches the genre, rhythm, mood and instrumentation of music that the individual recently heard.

Scientists have previously "reconstructed" other sounds from brain activity, such as human speech, bird song and horse whinnies. However, few studies have attempted to recreate music from brain signals.

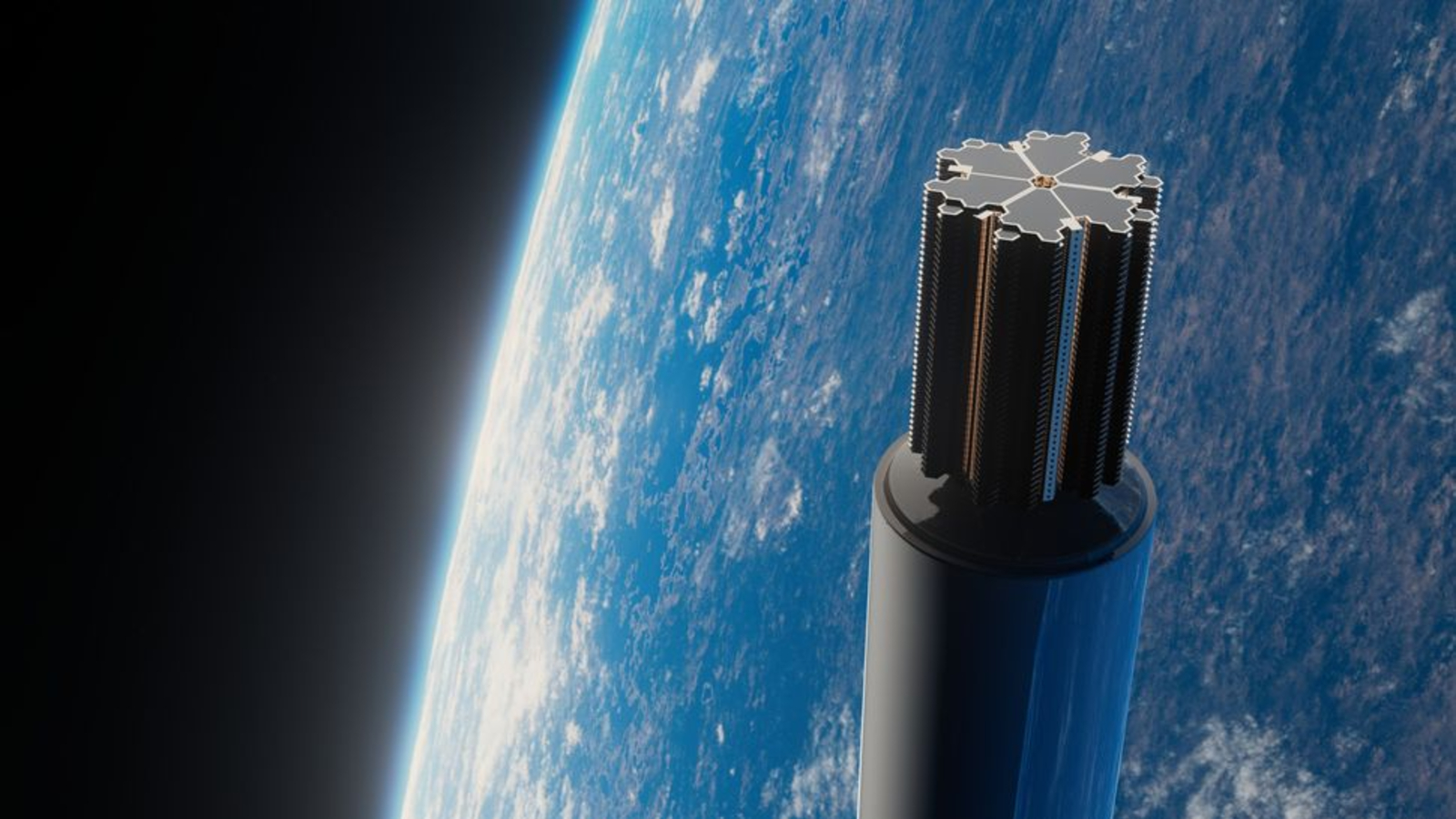

Now, researchers have built an AI-based pipeline, called Brain2Music, that harnesses brain imaging data to generate music that resembles short snippets of songs a person was listening to when their brain was scanned. They described the pipeline in a paper, published July 20 to the preprint database arXiv, which has not yet been peer-reviewed.

The scientists used brain scans that had previously been collected via a technique called functional magnetic resonance imaging (fMRI), which tracks the flow of oxygen-rich blood through the brain to see which regions are most active. The scans were collected from five participants as they listened to 15-second music clips spanning a range of genres, including blues, classical, country, disco, hip-hop, jazz and pop.

Using a portion of the brain imaging data and song clips, the researchers first trained an AI program to find links between features of the music, including the instruments used and its genre, rhythm and mood, and participants' brain signals. The music's mood was defined by researchers using labels such as happy, sad, tender, exciting, angry or scary.

The AI was customized for each person, drawing links between their unique brain activity patterns and various musical elements.

After being trained on a selection of data, the AI could convert the remaining, previously unseen, brain imaging data into a form that represented musical elements of the original song clips. The researchers then fed this information into another AI model previously developed by Google, called MusicLM. MusicLM was originally developed to generate music from text descriptions, such as "a calming violin melody backed by a distorted guitar riff."
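
To make the train-then-predict step concrete, here is a minimal sketch of how a per-person model could map brain responses to a numerical description of the music. It assumes a ridge (regularized linear) regression, which the article does not specify, and it runs on randomly generated stand-in data rather than real scans. The names `fit_ridge` and `mean_correlation`, along with the array sizes, are hypothetical and chosen only for illustration.

```python
import numpy as np

def fit_ridge(X, Y, alpha=1.0):
    """Closed-form ridge regression: W = (X^T X + alpha * I)^-1 X^T Y."""
    n_features = X.shape[1]
    gram = X.T @ X + alpha * np.eye(n_features)
    return np.linalg.solve(gram, X.T @ Y)

def mean_correlation(A, B):
    """Average Pearson correlation across the columns of A and B."""
    A_c = A - A.mean(axis=0)
    B_c = B - B.mean(axis=0)
    num = (A_c * B_c).sum(axis=0)
    den = np.sqrt((A_c ** 2).sum(axis=0) * (B_c ** 2).sum(axis=0))
    return float((num / den).mean())

# Synthetic stand-ins (assumptions, not real data):
# X plays the role of brain responses, Y the role of music features.
rng = np.random.default_rng(0)
n_clips, n_voxels, n_dims = 200, 50, 8
true_W = rng.normal(size=(n_voxels, n_dims))
X = rng.normal(size=(n_clips, n_voxels))
Y = X @ true_W + 0.1 * rng.normal(size=(n_clips, n_dims))

# Train on one portion of the clips, hold out the rest as "unseen" data.
X_train, X_test = X[:160], X[160:]
Y_train, Y_test = Y[:160], Y[160:]

W = fit_ridge(X_train, Y_train, alpha=1.0)

# Convert held-out brain data into predicted music features.
Y_pred = X_test @ W
score = mean_correlation(Y_pred, Y_test)
print(f"held-out correlation: {score:.3f}")
```

In a setup like this, the predicted features for the held-out clips would be what gets passed on to the music generator. One such model would be fitted per participant, since the mapping depends on each person's brain activity patterns.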

MusicLM used this information to generate musical clips, which can be listened to online and which resembled the original song snippets fairly accurately, although the AI captured some features of the original tunes much better than others.

"The agreement, in terms of the mood of the reconstructed music and the original music, was around 60%," study co-author Timo Denk, a software engineer at Google in Switzerland, told Live Science. The genre and instrumentation in the reconstructed and original music matched significantly more often than would be expected by chance. Out of all the genres, the AI could most accurately distinguish classical music.
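
As a rough illustration of what "agreement" and "more often than would be expected by chance" can mean, the sketch below compares labels for original and reconstructed clips, then estimates a chance level by shuffling the reconstructed labels. This is not the study's evaluation method; the label lists are invented toy values, and the function names `agreement` and `chance_agreement` are hypothetical.

```python
import random

def agreement(original, reconstructed):
    """Fraction of clips whose labels match."""
    matches = sum(o == r for o, r in zip(original, reconstructed))
    return matches / len(original)

def chance_agreement(original, reconstructed, n_shuffles=1000, seed=0):
    """Average agreement after randomly re-pairing the reconstructed labels."""
    rng = random.Random(seed)
    shuffled = list(reconstructed)
    total = 0.0
    for _ in range(n_shuffles):
        rng.shuffle(shuffled)
        total += agreement(original, shuffled)
    return total / n_shuffles

# Toy mood labels, made up for illustration only (not data from the study).
original = ["happy", "sad", "tender", "exciting", "angry",
            "scary", "happy", "sad", "tender", "exciting"]
reconstructed = ["happy", "sad", "tender", "angry", "angry",
                 "happy", "happy", "tender", "tender", "exciting"]

observed = agreement(original, reconstructed)
chance = chance_agreement(original, reconstructed)
print(f"observed agreement: {observed:.2f}, chance level: {chance:.2f}")
```

If the observed agreement sits well above the shuffled baseline, the match between reconstructed and original labels is unlikely to be a coincidence of how often each label occurs.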

"The method is pretty robust across the five subjects we evaluated," Denk said. "If you take a new person and train a model for them, it's likely that it will also work well."

Ultimately, the aim of this work is to shed light on how the brain processes music, said co-author Yu Takagi, an assistant professor of computational neuroscience and AI at Osaka University in Japan.

As expected, the team found that listening to music activated brain regions in the primary auditory cortex, where signals from the ears are interpreted as sounds. Another region of the brain, called the lateral prefrontal cortex, seems to be important for processing the meaning of songs, but this needs to be confirmed by further research, Takagi said. This region of the brain is also known to be involved in planning and problem-solving.

Interestingly, a past study found that the activity of different parts of the prefrontal cortex dramatically shifts when freestyle rappers improvise.

Future studies could explore how the brain processes music of different genres or moods, Takagi added. The team also hopes to explore whether AI could reconstruct music that people are only imagining in their heads, rather than actually listening to.

Carissa Wong is a freelance reporter who holds a PhD in cancer immunology from Cardiff University, in collaboration with the University of Bristol. She was formerly a staff writer at New Scientist magazine covering health, environment, technology, nature and ancient life, and has also written for MailOnline.