'Crazy idea' memory device could slash AI energy consumption by up to 2,500 times

By performing computations directly inside memory cells, CRAM could dramatically reduce power demands for AI workloads. Scientists claim it's a solution to AI's huge energy consumption.

Researchers have developed a new type of memory device that they say could reduce the energy consumption of artificial intelligence (AI) by a factor of at least 1,000.

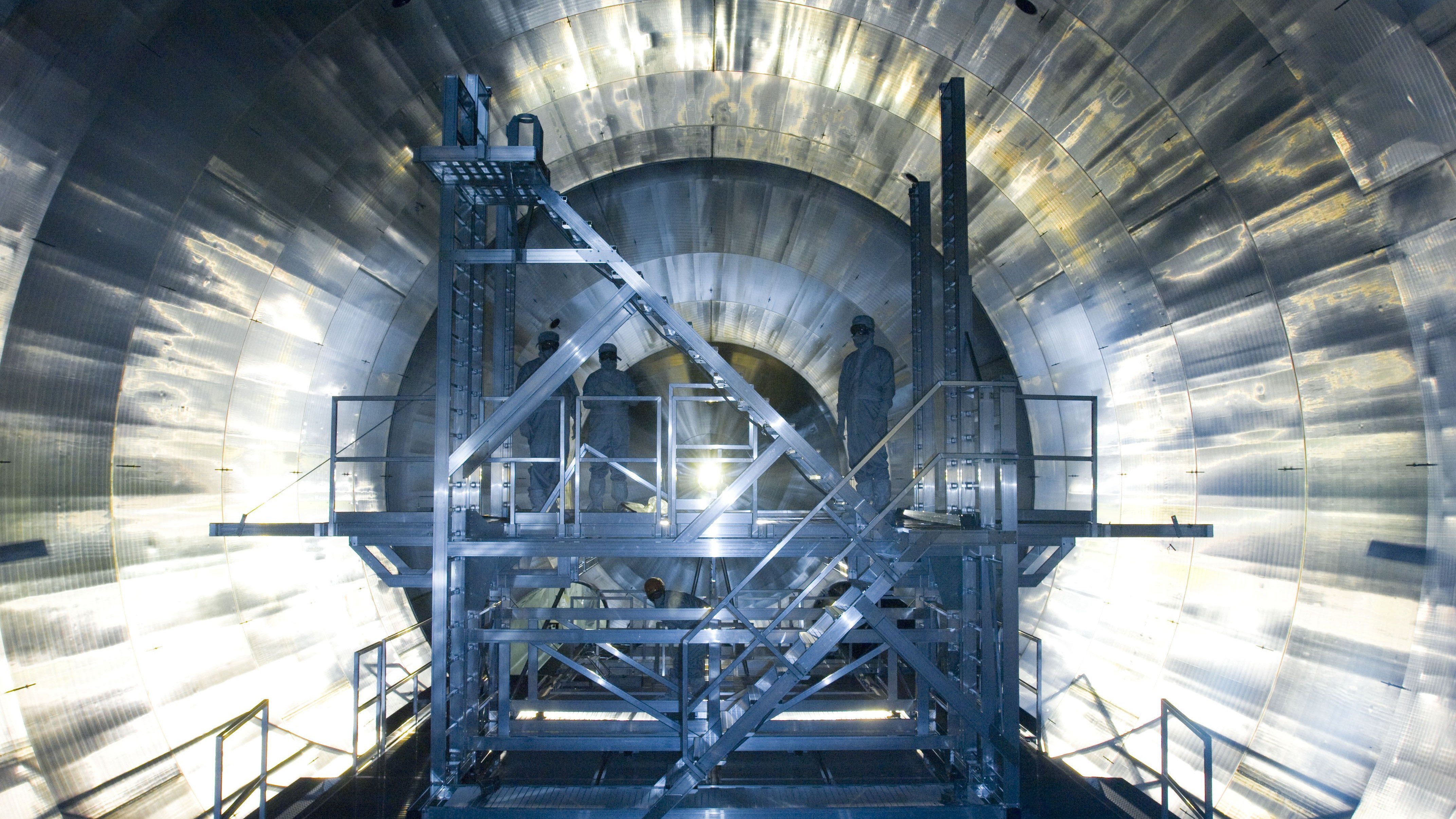

Called computational random-access memory (CRAM), the new device performs computations directly within its memory cells, eliminating the need to transfer data across different parts of a computer.

In traditional computing, data constantly moves between the processor (where data is processed) and the memory (where data is stored) — in most computers this is the RAM module. This process is particularly energy-intensive in AI applications, which typically involve complex computations and massive amounts of data.
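To make the data-movement argument concrete, here is a toy Python sketch, not a model of CRAM's actual circuitry. It assumes a flat memory that counts every word sent to or from the processor; `CountingMemory`, `add_in_place` and the two vector-add functions are hypothetical names invented for illustration. Adding two vectors on the processor requires two loads and one store per element, while the idealized in-memory version computes each sum where the data already sits.

```python
# Toy illustration of processor-memory traffic. All names are hypothetical;
# this is not how CRAM implements logic, only a count of words moved.

class CountingMemory:
    """A flat memory that counts every word transferred to or from the processor."""

    def __init__(self, size: int):
        self.cells = [0] * size
        self.transfers = 0

    def load(self, addr: int) -> int:
        self.transfers += 1          # word travels memory -> processor
        return self.cells[addr]

    def store(self, addr: int, value: int) -> None:
        self.transfers += 1          # word travels processor -> memory
        self.cells[addr] = value

    def add_in_place(self, dst: int, a: int, b: int) -> None:
        """Hypothetical in-memory op: the sum is formed inside the array, no transfer."""
        self.cells[dst] = self.cells[a] + self.cells[b]


def vector_add_on_processor(mem, n, a_base, b_base, out_base):
    for i in range(n):
        x = mem.load(a_base + i)
        y = mem.load(b_base + i)
        mem.store(out_base + i, x + y)   # the addition happens in the "processor"


def vector_add_in_memory(mem, n, a_base, b_base, out_base):
    for i in range(n):
        mem.add_in_place(out_base + i, a_base + i, b_base + i)


if __name__ == "__main__":
    n = 1_000
    for name, op in (("processor", vector_add_on_processor),
                     ("in-memory", vector_add_in_memory)):
        mem = CountingMemory(3 * n)
        mem.cells[:n] = range(n)         # operand a (setup, not counted)
        mem.cells[n:2 * n] = range(n)    # operand b (setup, not counted)
        op(mem, n, 0, n, 2 * n)
        print(f"{name}: {mem.transfers} transfers")
    # → processor: 3000 transfers
    # → in-memory: 0 transfers
```

Real systems use caches and wider buses, so actual traffic differs; the sketch only shows why keeping computation next to the data removes a cost that grows with the size of the workload.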

According to figures from the International Energy Agency (IEA), global electricity consumption by data centers, AI and cryptocurrency could double from 460 terawatt-hours (TWh) in 2022 to 1,000 TWh in 2026, equivalent to Japan's total electricity consumption.
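As a quick sanity check on that projection, the sketch below computes the overall growth factor and the compound annual growth rate it implies. It uses only the two figures quoted above; the function names are illustrative, and the annual rate is a derived number, not one published by the IEA.

```python
# Back-of-the-envelope check of the IEA projection quoted in the article:
# 460 TWh in 2022 rising to about 1,000 TWh in 2026.

def growth_factor(start_twh: float, end_twh: float) -> float:
    """Ratio of end-year to start-year consumption."""
    return end_twh / start_twh


def implied_annual_growth(start_twh: float, end_twh: float, years: int) -> float:
    """Compound annual growth rate that turns start_twh into end_twh over `years` years."""
    return growth_factor(start_twh, end_twh) ** (1 / years) - 1


if __name__ == "__main__":
    factor = growth_factor(460, 1_000)
    cagr = implied_annual_growth(460, 1_000, years=4)
    print(f"growth factor: {factor:.2f}x")        # → growth factor: 2.17x
    print(f"implied annual growth: {cagr:.1%}")   # → implied annual growth: 21.4%
```

In other words, "double" is slightly conservative: the quoted figures imply consumption growing by a little over a fifth every year.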

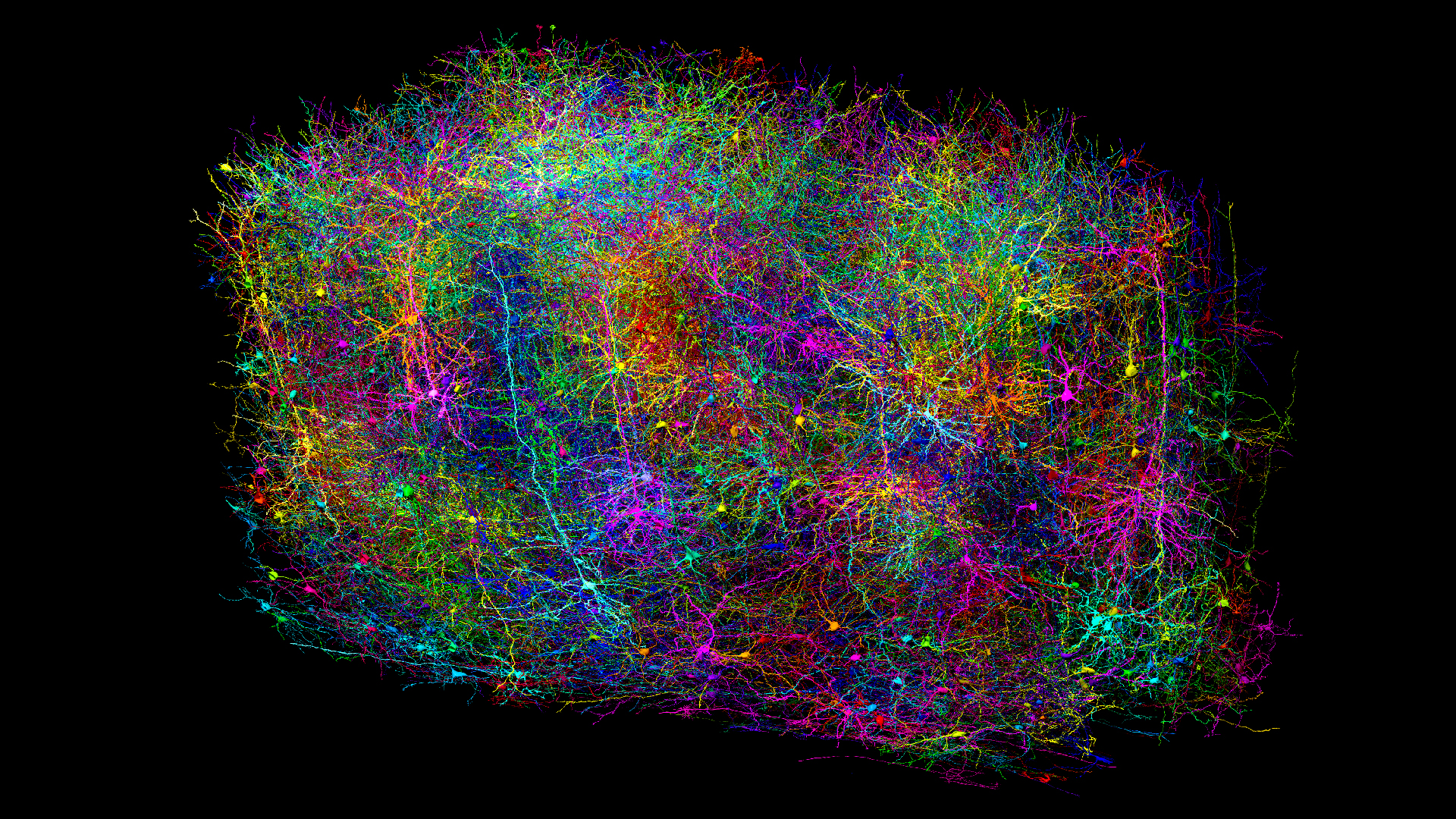

In a peer-reviewed study published July 25 in the journal npj Unconventional Computing, researchers demonstrated that CRAM could perform key AI tasks like scalar addition and matrix multiplication in 434 nanoseconds, using just 0.47 microjoules of energy. That is roughly 2,500 times less energy than conventional systems that keep logic and memory components separate, the researchers said.
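The two reported figures also let you derive a couple of numbers the article does not state directly. The sketch below assumes the 0.47 microjoules are spent evenly across the 434-nanosecond run (to get an average power) and reads "2,500 times less" as the conventional system needing 2,500 times as much energy. Both results are back-of-the-envelope estimates, not values published by the researchers.

```python
# Derived quantities from the figures reported for CRAM in the article.
# These are estimates, not measurements published by the researchers.

CRAM_ENERGY_J = 0.47e-6   # 0.47 microjoules (reported)
CRAM_TIME_S = 434e-9      # 434 nanoseconds (reported)
ENERGY_RATIO = 2_500      # conventional system uses ~2,500x more energy (reported)


def average_power_w(energy_j: float, time_s: float) -> float:
    """Average power, assuming the energy is spent uniformly over the run time."""
    return energy_j / time_s


def implied_conventional_energy_j(cram_energy_j: float, ratio: float) -> float:
    """Energy a conventional system would need, given the reported ratio."""
    return cram_energy_j * ratio


if __name__ == "__main__":
    power = average_power_w(CRAM_ENERGY_J, CRAM_TIME_S)
    conventional = implied_conventional_energy_j(CRAM_ENERGY_J, ENERGY_RATIO)
    print(f"average CRAM power: {power:.2f} W")                  # → average CRAM power: 1.08 W
    print(f"implied conventional energy: {conventional * 1e3:.3f} mJ")  # → implied conventional energy: 1.175 mJ
```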

The research, which has been 20 years in the making, received financial backing from the U.S. Defense Advanced Research Projects Agency (DARPA), as well as the National Institute of Standards and Technology, the National Science Foundation and the tech company Cisco.

Jian-Ping Wang, a senior author of the paper and a professor in the University of Minnesota’s department of electrical and computer engineering, said the researchers' proposal to use memory cells for computing was initially deemed "crazy."

"With an evolving group of students since 2003 and a true interdisciplinary faculty team built at the University of Minnesota — from physics, materials science and engineering, computer science and engineering, to modeling and benchmarking, and hardware creation — [we] now have demonstrated that this kind of technology is feasible and is ready to be incorporated into technology," Wang said in a statement.

The most efficient RAM devices typically use four or five transistors to store a single bit of data (either 1 or 0).

CRAM gets its efficiency from something called "magnetic tunnel junctions" (MTJs). An MTJ is a tiny device that stores data using the spin of electrons rather than electrical charge, which traditional memory relies on. This makes MTJs faster, more energy-efficient and more resistant to wear and tear than conventional memory chips like RAM.
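For readers curious how a magnetic state becomes a bit, here is a simplified Python model. In an MTJ, one magnetic layer is fixed and the other can flip: when the two are aligned (parallel) the junction's resistance is low, and when they are opposed (antiparallel) it is high, so a bit is read by sensing resistance. The resistance values, the "1 = antiparallel" convention and the class and function names are hypothetical placeholders, not parameters from the CRAM study, and the sketch does not model how CRAM performs logic.

```python
from dataclasses import dataclass

# Simplified model of a magnetic tunnel junction (MTJ) as a 1-bit memory cell.
# Resistance values are hypothetical placeholders, not measurements from the CRAM study.
R_PARALLEL_OHM = 3_000.0       # free layer aligned with fixed layer -> low resistance
R_ANTIPARALLEL_OHM = 6_000.0   # free layer opposed to fixed layer -> high resistance


@dataclass
class MTJ:
    antiparallel: bool = False  # magnetic state; it persists without power

    def write(self, bit: int) -> None:
        """Store a bit by setting the magnetic state (assumed convention: 1 = antiparallel)."""
        self.antiparallel = bool(bit)

    def resistance(self) -> float:
        return R_ANTIPARALLEL_OHM if self.antiparallel else R_PARALLEL_OHM

    def read(self) -> int:
        """Recover the bit by comparing resistance against a midpoint threshold."""
        threshold = (R_PARALLEL_OHM + R_ANTIPARALLEL_OHM) / 2
        return int(self.resistance() > threshold)


def tmr_ratio() -> float:
    """Tunnel magnetoresistance ratio: relative resistance change between the two states."""
    return (R_ANTIPARALLEL_OHM - R_PARALLEL_OHM) / R_PARALLEL_OHM


if __name__ == "__main__":
    cell = MTJ()
    for bit in (0, 1, 0):
        cell.write(bit)
        print(bit, cell.resistance(), cell.read())
    print(f"TMR: {tmr_ratio():.0%}")  # → TMR: 100%
```

The larger the gap between the two resistances, the easier it is to tell a 0 from a 1 reliably.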

CRAM is also adaptable to different AI algorithms, the researchers said, making it a flexible and energy-efficient solution for AI computing.

The focus will now turn to industry, where the research team hopes to demonstrate CRAM at a larger scale and to work with semiconductor companies on scaling up the technology.

Owen Hughes is a freelance writer and editor specializing in data and digital technologies. Previously a senior editor at ZDNET, Owen has been writing about tech for more than a decade, during which time he has covered everything from AI, cybersecurity and supercomputers to programming languages and public sector IT. Owen is particularly interested in the intersection of technology, life and work – in his previous roles at ZDNET and TechRepublic, he wrote extensively about business leadership, digital transformation and the evolving dynamics of remote work.